Choosing the Right Agentic Framework: Lessons from the Delivery Teams

Prasad Hastak

11 May, 2026

After leading multiple agentic AI deployments over the past 18 months across BFSI, manufacturing, and B2B SaaS, I have observed one consistent pattern: framework selection is no longer a technical detail in a solution architect’s document. It is a strategic decision that shapes delivery velocity, governance posture, and total cost of ownership for years.

Our Experiment: Email-to-Quote AI Agent for Sales

We gave ourselves under two weeks to build a demo: an agent that could read incoming RFQs from email, extract part specifications, check them against a product master, and draft a preliminary quote for sales review. Simple enough on paper.

The engineering team’s first instinct was to build it in LangGraph end-to-end. At first glance, it looked like a clean fit. In practice, two-thirds of the work was pure integration plumbing (Outlook, the ERP, SharePoint, a pricing sheet), and only the quote-drafting step needed real agentic reasoning.

“Two-thirds of the work was integration plumbing. Only one step needed real agentic reasoning.”

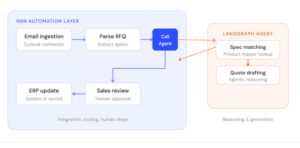

We split the architecture: n8n handled ingestion, parsing, and system-of-record updates. A small LangGraph agent handled spec-matching and quote generation, called as a single node inside the n8n flow.

Figure 1 — The split architecture: n8n owns the flow; LangGraph owns the reasoning
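The split described above can be sketched in plain Python to show where the boundary sits. This is a minimal illustration under stated assumptions, not the delivered implementation and not the n8n or LangGraph API: `parse_rfq`, `draft_quote`, `run_flow`, the `PART x QTY` line format, and the `PRODUCT_MASTER` entries are all hypothetical, and a dictionary lookup stands in for the agent’s spec-matching reasoning.

```python
import re
from dataclasses import dataclass, field

# Hypothetical product master; in the real flow this lookup would hit the ERP.
PRODUCT_MASTER = {
    "BRG-6204": 4.50,   # unit price, illustrative only
    "SHF-20MM": 18.00,
}


@dataclass
class QuoteDraft:
    lines: list = field(default_factory=list)      # (part, qty, line_total)
    unmatched: list = field(default_factory=list)  # parts not in the master
    status: str = "pending_sales_review"           # drafts always go to sales

    @property
    def total(self) -> float:
        return round(sum(line_total for _, _, line_total in self.lines), 2)


def parse_rfq(email_body: str) -> list:
    """Workflow-layer step (n8n in the article): extract 'PART x QTY' lines."""
    items = []
    for raw in email_body.splitlines():
        match = re.fullmatch(r"([A-Za-z0-9-]+)\s+x\s+(\d+)", raw.strip())
        if match:
            items.append((match.group(1).upper(), int(match.group(2))))
    return items


def draft_quote(items: list) -> QuoteDraft:
    """Agent-layer step (LangGraph in the article).

    Assumption: a price lookup stands in for LLM-backed spec matching.
    """
    draft = QuoteDraft()
    for part, qty in items:
        price = PRODUCT_MASTER.get(part)
        if price is None:
            draft.unmatched.append(part)
        else:
            draft.lines.append((part, qty, price * qty))
    return draft


def run_flow(email_body: str) -> QuoteDraft:
    """The workflow owns the sequence; the agent is one call inside it."""
    items = parse_rfq(email_body)   # ingestion and parsing
    return draft_quote(items)       # the single agent node
```

In this shape, adding an approval step or a second product line changes `run_flow` or `PRODUCT_MASTER`, while `draft_quote` keeps the same inputs and outputs. That is the property the split is meant to buy.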

The demo went live in nine working days. Later, when we added a human-in-the-loop approval step and a second product line, we changed the n8n flow in an afternoon, with no agent refactor required.

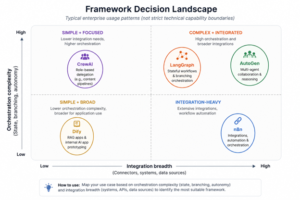

That experience reinforced how the leading options have settled into their lanes:


- n8n – our default for integration-heavy automation. Visual flows, hundreds of pre-built connectors, and easy iteration when business rules shift mid-project.

- LangGraph – our choice when state and branching matter. For a BFSI client building a loan pre-qualification agent with conditional document checks and escalation paths, its graph-based state management was non-negotiable.

- AutoGen – strong for multi-agent reasoning. We piloted it for a research-summarization workflow where a planner, retriever, and critic collaborated. Powerful, but heavier to operationalize.

- CrewAI – useful when role-based delegation maps cleanly to the business process, such as a content pipeline with researcher, writer, and editor roles.

- Dify – our go-to for internal app prototypes. We have used it to stand up RAG-based knowledge assistants for sales teams in under a week.
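To make “state and branching” from the LangGraph item concrete, here is a library-free sketch of conditional routing over shared state, loosely modelled on the loan pre-qualification example. It deliberately does not use LangGraph’s API. The node names, the required documents, and the 0.4 debt-ratio threshold are all hypothetical values chosen for illustration.

```python
REQUIRED_DOCS = {"id_proof", "income_proof"}  # hypothetical checklist
MAX_DEBT_RATIO = 0.4                          # hypothetical escalation threshold


def check_documents(state: dict) -> dict:
    missing = sorted(REQUIRED_DOCS - set(state["documents"]))
    return {**state, "missing": missing}


def request_documents(state: dict) -> dict:
    return {**state, "outcome": "documents_requested"}


def assess(state: dict) -> dict:
    ratio = state["monthly_debt"] / state["monthly_income"]
    return {**state, "debt_ratio": ratio}


def escalate(state: dict) -> dict:
    return {**state, "outcome": "escalated_to_underwriter"}


def prequalify(state: dict) -> dict:
    return {**state, "outcome": "prequalified"}


NODES = {
    "check_documents": check_documents,
    "request_documents": request_documents,
    "assess": assess,
    "escalate": escalate,
    "prequalify": prequalify,
}


def next_node(node: str, state: dict):
    """Conditional edges: read the state, name the next node, or None to stop."""
    if node == "check_documents":
        return "request_documents" if state["missing"] else "assess"
    if node == "assess":
        return "escalate" if state["debt_ratio"] > MAX_DEBT_RATIO else "prequalify"
    return None  # terminal nodes


def run(state: dict) -> dict:
    node = "check_documents"
    while node is not None:
        state = NODES[node](state)
        state = {**state, "path": state.get("path", []) + [node]}
        node = next_node(node, state)
    return state
```

Each node returns a new state rather than mutating the old one, and routing depends only on that state. Once flows look like this, a graph-oriented framework earns its place because it manages the routing, persistence, and inspection that this sketch does by hand.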

The questions that consistently sharpen the decision in our scoping calls:

- Is the team optimizing speed or control?

- Visual workflow layer, or code-first architecture?

- How complex is the orchestration – linear, branching, or truly agentic?

- Will this scale into production with SLAs, or stay internal?

- What operational overhead can the support team realistically carry?

For most enterprise clients, we now recommend a portfolio model: n8n for integrations, LangGraph for core agent orchestration, Dify for rapid internal apps. One framework rarely covers the full surface area.

“One framework rarely covers the full surface area.”
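As a rough illustration, the scoping questions and the portfolio model can be encoded as a small rule table. The `UseCase` fields and the rules in `recommend` are a simplification assumed for this sketch. They compress the article’s heuristics and are not a complete selection method.

```python
from dataclasses import dataclass

ORCHESTRATION_LEVELS = ("linear", "branching", "agentic")


@dataclass
class UseCase:
    integration_heavy: bool   # many systems of record to connect?
    orchestration: str        # one of ORCHESTRATION_LEVELS
    internal_prototype: bool  # rapid internal app, no production SLAs yet?


def recommend(use_case: UseCase) -> list:
    """Map a use case to the portfolio: n8n, LangGraph, Dify (hypothetical rules)."""
    if use_case.orchestration not in ORCHESTRATION_LEVELS:
        raise ValueError(f"unknown orchestration level: {use_case.orchestration!r}")

    picks = []
    if use_case.integration_heavy:
        picks.append("n8n")        # integrations and system-of-record updates
    if use_case.orchestration != "linear":
        picks.append("LangGraph")  # stateful, branching, or agentic core
    if use_case.internal_prototype:
        picks.append("Dify")       # rapid internal apps
    return picks                   # an empty list means: needs a scoping call


# The email-to-quote demo: integration-heavy, with one agentic step.
email_to_quote = UseCase(integration_heavy=True, orchestration="agentic",
                         internal_prototype=False)
print(recommend(email_to_quote))
```

The function returns a list because the recommendation is usually a combination of tools.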

The Pattern That Will Define the Next 24 Months

The teams that win will not be the ones chasing the loudest framework. They will be the ones that can quickly understand a use case, choose the right framework for it, and ship something the business can realistically maintain.

Framework selection directly shapes delivery velocity, governance, iteration speed, and long-term operational cost.

The landscape will keep evolving, but the core questions will remain the same:

What is the orchestration complexity? What is the integration surface? And what can the support team realistically operate?

Match the framework to the use case with discipline. That is the real strategic decision.

If you want a clearer framework-to-use-case mapping, or want to discuss agentic architecture decisions, let’s talk.